How We Cut Translation Time from 24 Hours to 2 Minutes Across 20 Languages

The Problem

A global crypto news site was publishing breaking news in English, but they had a serious bottleneck: getting that content translated into 20 other languages.

They had local editorial teams for each language, but these teams were overwhelmed. Every English article needed manual translation, which meant:

- 1-day turnaround from English publication to local language publication

- Missed opportunities – crypto news moves fast; day-old news is stale news

- Burnt-out editors spending their time translating instead of creating local content

- Inconsistent coverage across different language sites

The company wanted to move faster, but they didn't want to lose the human touch. Local editors understood cultural nuances, regional terminology, and when a direct translation just wouldn't land right. They needed both speed and quality.

The Solution

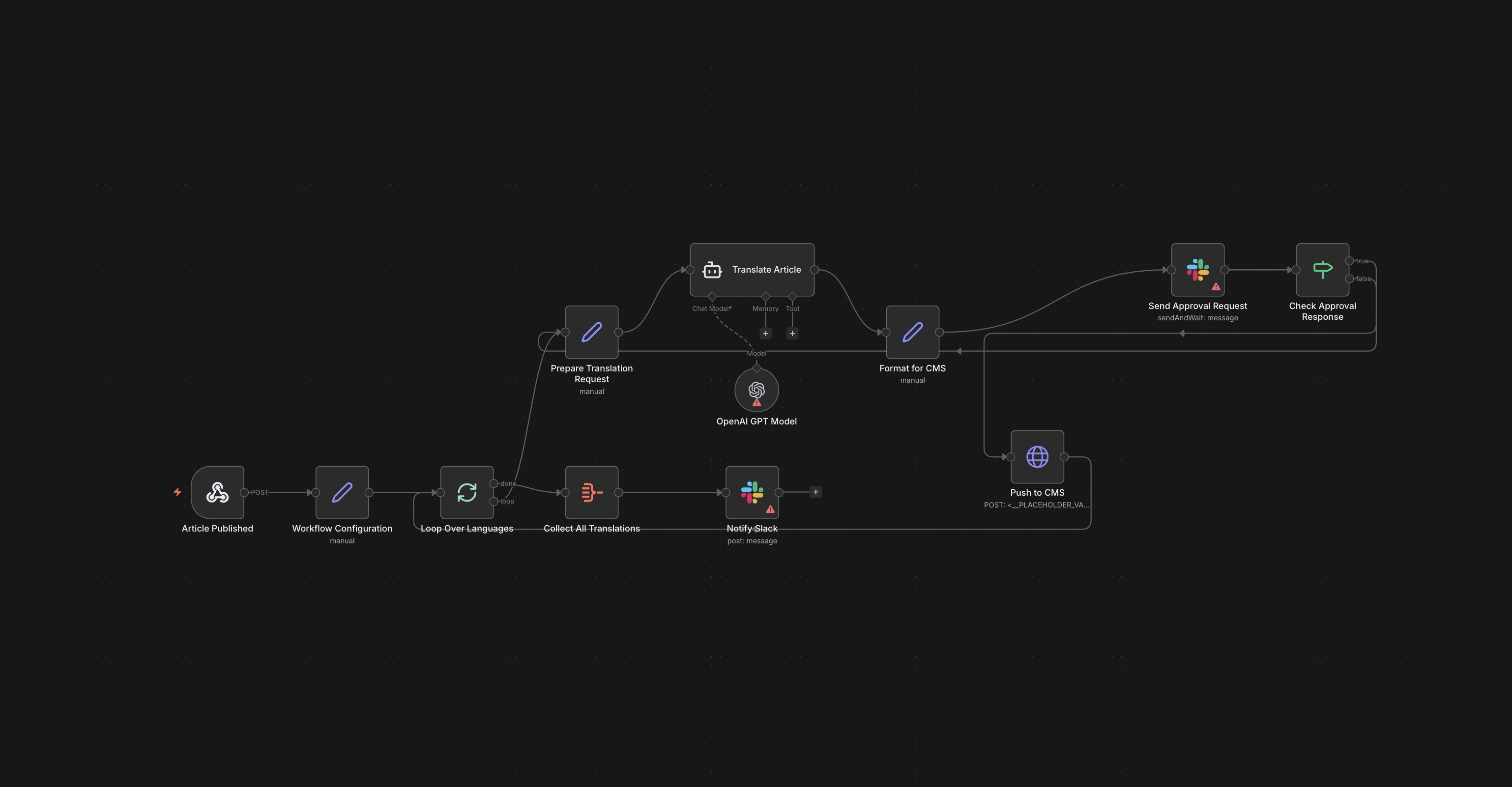

We built an AI workflow that does the heavy lifting while keeping humans in the driver's seat.

How It Works

English article gets published

The moment an article goes live in the CMS, it triggers the workflow automatically.

AI translates to 20 languages in parallel

Using n8n connected to Gemini, the AI translates the article into all 20 languages simultaneously. This happens in about 2 minutes.
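The fan-out can be sketched in a few lines of Python. This is an illustration only, not the production n8n workflow: `translate_one` is a hypothetical stand-in for the Gemini call, and the language codes are placeholders.

```python
import asyncio

# Placeholder subset; the real workflow covered 20 target languages.
TARGET_LANGUAGES = ["es", "de", "fr", "vi"]


async def translate_one(text: str, lang: str) -> str:
    """Hypothetical stand-in for the model call; it tags the text instead of translating."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"[{lang}] {text}"


async def translate_all(text: str, languages: list[str]) -> dict[str, str]:
    """Start one translation per language and wait for all of them together."""
    results = await asyncio.gather(*(translate_one(text, lang) for lang in languages))
    return dict(zip(languages, results))


drafts = asyncio.run(translate_all("Bitcoin hits new high", TARGET_LANGUAGES))
```

Because the calls run concurrently, total time is roughly that of the slowest single translation rather than the sum of all of them.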

Local editors get pinged in Slack

Each language team gets a notification: "New article ready for review." The translated draft is already in their CMS.
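As a rough sketch of what such a notification might carry, the snippet below builds the JSON body for a Slack incoming webhook. The use of an incoming webhook, the function name, and the draft URL are assumptions for illustration; the original workflow sent its messages from n8n.

```python
import json


def build_review_message(language: str, title: str, draft_url: str) -> str:
    """Build the JSON body for a Slack incoming webhook (a plain `text` message)."""
    text = f"New article ready for review ({language}): {title}\n{draft_url}"
    return json.dumps({"text": text})


# Hypothetical CMS draft link, used only to show the shape of the message.
payload = build_review_message(
    "de", "Bitcoin hits new high", "https://cms.example.com/drafts/123"
)
```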

Human checkpoint – this is where the magic happens

Editors can approve and publish the draft, edit it directly, or send it back for regeneration.
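One way to picture the checkpoint is as a small state machine. The state and action names below are hypothetical, chosen to mirror the three editor options; the real workflow lives in the CMS and n8n.

```python
from enum import Enum


class DraftState(Enum):
    PENDING_REVIEW = "pending_review"
    PUBLISHED = "published"
    REGENERATING = "regenerating"


# Allowed actions per state; an edit keeps the draft in review.
TRANSITIONS = {
    (DraftState.PENDING_REVIEW, "approve"): DraftState.PUBLISHED,
    (DraftState.PENDING_REVIEW, "edit"): DraftState.PENDING_REVIEW,
    (DraftState.PENDING_REVIEW, "regenerate"): DraftState.REGENERATING,
    (DraftState.REGENERATING, "draft_ready"): DraftState.PENDING_REVIEW,
}


def apply_action(state: DraftState, action: str) -> DraftState:
    """Return the next state, or raise if the action is not allowed here."""
    try:
        return TRANSITIONS[(state, action)]
    except KeyError:
        raise ValueError(f"{action!r} is not allowed in state {state.value}") from None
```

Nothing reaches `PUBLISHED` without an explicit `approve`, which is the property that keeps editors in control.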

Monitoring and improvement

We track failures and quality issues. When editors flag problems, we refine the prompts and improve the system.
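A simple tally is often enough to show where prompts need work. The sketch below assumes flags are recorded as (language, issue type) pairs; both the data and the categories are made up for illustration.

```python
from collections import Counter

# Hypothetical editor flags as (language, issue_type) pairs.
flags = [
    ("vi", "terminology"),
    ("vi", "terminology"),
    ("es", "tone"),
    ("vi", "tone"),
]


def top_issues(flags, n=3):
    """Return the n most frequent (language, issue_type) pairs with their counts."""
    return Counter(flags).most_common(n)


worst = top_issues(flags, n=1)
```

Here the most common flag is Vietnamese terminology, which would point to that language's prompt as the first one to refine.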

Why This Approach Works

It's not about replacing humans – it's about freeing them up.

Before: Editors spent hours translating every article manually.

After: Editors spend minutes reviewing and refining AI-generated translations.

The workflow respects what humans are good at (nuance, cultural context, editorial judgment) and lets AI handle what it's good at (speed, consistency, parallel processing).

We also built in flexibility. We tested Claude, ChatGPT, and Gemini for translation quality and cost. Gemini performed best for this specific use case, but the workflow isn't locked in – if a better model comes along, we can swap it out.
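In code terms, "not locked in" usually means the workflow depends on an interface rather than on a specific vendor. A minimal sketch, assuming a hypothetical `Translator` interface and a dummy backend in place of real model clients:

```python
from typing import Protocol


class Translator(Protocol):
    def translate(self, text: str, target_lang: str) -> str: ...


class EchoTranslator:
    """Hypothetical backend for illustration; a real one would call a model API."""

    def __init__(self, name: str) -> None:
        self.name = name

    def translate(self, text: str, target_lang: str) -> str:
        return f"{self.name}:{target_lang}:{text}"


def translate_article(text: str, target_lang: str, backend: Translator) -> str:
    """The workflow only needs the interface, so the backend can be swapped."""
    return backend.translate(text, target_lang)
```

Switching models then means passing a different backend object, with no change to the rest of the pipeline.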

Technical Details

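The stack: n8n for workflow orchestration, Gemini for translation, Slack for editor notifications, and the site's CMS as both the trigger and the home for translated drafts.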

Challenges we solved:

- Volume spikes: Sometimes multiple articles publish minutes apart. We designed the workflow to queue them properly so nothing gets dropped or duplicated.

- Quality control: When editors flagged poor translations, we didn't just fix that one article – we refined the prompts to improve future translations across the board.

- Failure handling: If something breaks (API timeout, CMS connection issue), the system alerts the team and retries automatically.
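The queueing and retry behaviour described above can be sketched as follows. This is an assumption-laden illustration rather than the actual n8n configuration: the class and function names are hypothetical, the "seen" set lives in memory (a real system would persist it), and the backoff schedule is an example.

```python
import time
from collections import deque


class ArticleQueue:
    """FIFO queue that ignores an article ID it has already accepted."""

    def __init__(self) -> None:
        self._pending = deque()
        self._seen = set()

    def enqueue(self, article_id: str) -> bool:
        if article_id in self._seen:
            return False  # duplicate trigger, skip it
        self._seen.add(article_id)
        self._pending.append(article_id)
        return True

    def dequeue(self):
        """Return the oldest pending article ID, or None if the queue is empty."""
        return self._pending.popleft() if self._pending else None


def run_with_retry(task, alert, attempts=3, base_delay=1.0):
    """Call task(); on each failure alert the team, then retry with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception as exc:
            alert(f"attempt {attempt} of {attempts} failed: {exc}")
            if attempt == attempts:
                raise  # out of retries, surface the error
            time.sleep(base_delay * 2 ** (attempt - 1))
```

Deduplicating on the article ID handles repeated triggers for the same article, while the FIFO order keeps articles published minutes apart from overtaking each other.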

The Impact

While exact metrics are confidential, here's what we achieved:

- Higher content velocity across all local domains

- Increased traffic and engagement on non-English sites

- Strong editor satisfaction – they could focus on creating value instead of manual translation work

With translated drafts ready in about 2 minutes, breaking news could reach local sites as soon as an editor approved it, not the next day. For a crypto news site where timing is everything, this was a game-changer.

When This Approach Makes Sense

This workflow works well when:

- You have high-volume content that needs to scale across languages

- You have local experts who can review and refine

- Speed matters – you're losing business value with delays

- You're willing to iterate and improve based on feedback

It doesn't work when:

- Content is highly creative or poetic (AI struggles with nuance)

- You have zero human review capacity (quality will suffer)

- Volume is low enough that manual translation is sustainable

What We Learned

Human-in-the-loop isn't optional – it's the whole point.

The workflow only works because editors trust it enough to use it, but they also have the power to override it. That trust came from giving them control.

AI models aren't one-size-fits-all.

We tested multiple models because translation quality varies wildly depending on language pairs and content type. What works for Spanish might not work for Vietnamese.

Monitoring is everything.

If you can't see where things are breaking or where quality is slipping, you can't improve. We built in feedback loops from day one.

The real win wasn't the technology – it was designing a system that made people's work better, not obsolete.

Want to explore if an AI workflow could work for your team?

Let's talk about what you're trying to solve, not what tools you think you need.

Get in touch